Will Robots Fight Our Next Great War?

By James Donahue

Elon Musk, a man deeply entrenched in artificial intelligence research, and many of his cohorts are asking this very question. In fact, Musk, Apple’s Steve Wozniak, Google executive Demis Hassabis and even the late physicist Stephen Hawking joined a group of over 1,000 experts and robotics researchers in 2015 to sign an open letter calling for a ban on military AI development and autonomous weapons.

Military strategists may argue that sending robots capable of making intelligent decisions into front-line battle might make war safer to fight. But think about that for a moment.

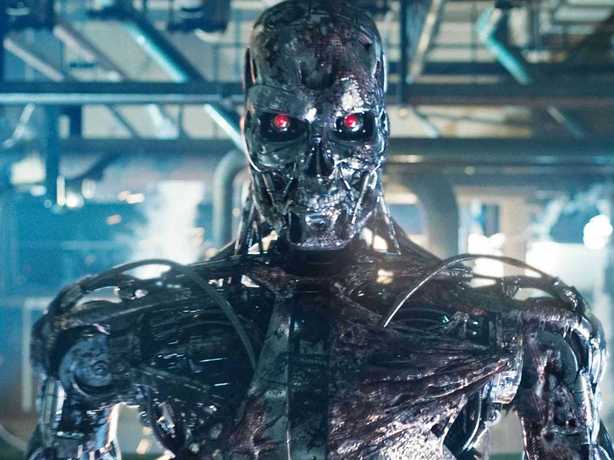

Robots that can think fast enough to win a war, likely out-think the very men who invented them, and possess the capability of reason, will waste no time in turning against their creators. While researchers say they would in some way put preventative measures in the “minds” of these machines, can we trust such measures to really work, especially in robots designed to do open battle in warfare? What will stop such machines from literally taking over the world and possibly destroying the human race?

In the letter, the authors describe autonomous weapons as the “third revolution in warfare, after gunpowder and nuclear arms.” These machines would be capable of selecting targets and operating without direct human control. The warning is that “autonomous weapons will become the Kalashnikovs of tomorrow.”

For those who don’t know the history of Mikhail Kalashnikov, he was the Russian arms designer behind the AK-47, the deadly assault weapon now making headlines around the world.

Both Musk and Hawking have warned that the development of full artificial intelligence in robots is such a dangerous concept that once unleashed, these thinking war machines could “spell the end of the human race.” Indeed, machines capable of reason and plotting to defeat an enemy on the battlefield could also be capable of destroying the very people who create them. Any human seeking to control them might well be perceived as an enemy combatant.

Representatives of this opposition group presented the letter at a United Nations conference in Geneva where discussion centered on the future of weaponry. The subject of “killer robots” was also on the agenda. In the end, however, the United Kingdom opposed a ban on the development of these autonomous weapons.

That was in 2015. Since then the development of these deadly killing machines has been proceeding at what we can only guess is a fever pitch. The advent of remotely flown military drones capable of bombing, photographing and delivering messages and packages is well known. Robot-controlled cars, trucks, ships and even aircraft are becoming reality even as these words are being formed. Drones that can locate and strike an enemy without deferring to a human controller may be just around the corner . . . if they don’t already exist.

It doesn’t take a genius to understand that handing machines the power over who lives and dies is crossing a dangerous moral line. It is said that an automated sentry now standing guard on South Korea’s border with North Korea is capable of spotting and tracking targets up to four kilometers away. If it can do this, it may be easy to give it the power to aim a weapon at a target and pull the trigger.

Last year Stuart Russell, an AI researcher at the University of California in Berkeley, was at a United Nations Convention on Conventional Weapons event hosted by the Campaign to Stop Killer Robots. He joined others there in presenting a troubling film depicting the kinds of danger such weapons can produce.

The military film unveils a tiny drone that hunts and kills with ruthless efficiency. In the film the drone falls into the wrong hands and unleashes unstoppable death and destruction. People are cut down on the open streets and the machine cannot be brought down.

Russell said the technology illustrated in the film is already available. He said the film depicted “an integration of existing capabilities. It is not science fiction. It is easier to achieve than self-driving cars, which require far higher standards of performance.”
