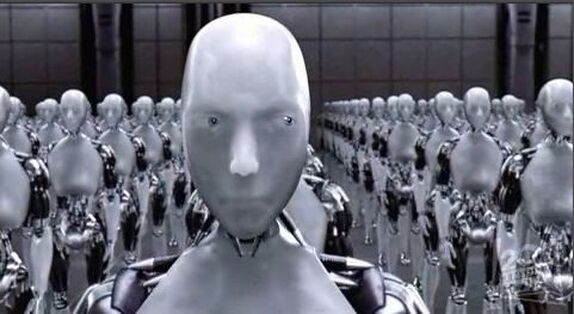

Will Machines Someday Rule?

By James Donahue

There is something interesting occurring in our contemporary world.

--The human race is recklessly polluting the air, water and land and using up the planet’s natural resources so completely that it appears we are heading for an early extinction.

--While this is going on, the development of intelligent robotic machines is reaching a point where the machines are beginning to think for themselves.

Of course, this leads to an interesting idea. Is it possible that once robots learn to reason, replace broken parts, repair each other and even build new and better replicas of themselves, they will continue on to rule this planet long after intelligent life on it is dead?

This is not as crazy an idea as it may sound at first glance. Louis Del Monte, physicist and author of the book The Artificial Intelligence Revolution, believes that the day is soon coming when machines will become self-conscious, have the ability to protect themselves, and may even view humans as “an unpredictable and dangerous species.”

In a recent interview with Dylan Love for an article in Business Insider, Del Monte warned that most humans will have become part human and part machine within the next century. We will turn ourselves into cyborgs in our quest for immortality and our need to survive in a deadly environment. This also may be necessary for us to be capable of traveling in space and exploring other planets in the universe.

Because we are animals, we cannot leave Earth without taking all of the ingredients needed to sustain life along with us. Machines don’t need food, water and air to survive. A handy oil can, a few tools and a solar energy receiver may be all they will need to keep going.

That is the positive scenario. Del Monte also offers a warning that the machines may regard themselves as superior to us, mostly because their computer wiring will allow them to think and solve problems faster than the human brain. Also, machines will lack compassion, which will make them great soldiers with the ability to crush any creature that gets in their way.

They “might view us the same way we view harmful insects,” Del Monte warned. He noted that humans do dangerous things like start wars, build weapons powerful enough to destroy our world, and make computer viruses. The machines that operate on computer technology would regard the latter as a serious threat.

Robotic machines already are developing artificial intelligence. For instance, Del Monte notes that a human pacemaker actually uses sensors and a form of artificial intelligence to regulate the human heart.

He said experiments in Lausanne, Switzerland, have shown that robots designed to cooperate in finding beneficial resources quickly learned to lie to each other in order to hoard the resources for themselves. This is, in a primitive sense, an early form of self-preservation.

It seems that in our rush to develop robots to serve industrial and commercial needs, we may be forgetting the famous three laws of robotics developed by science fiction writer Isaac Asimov. He outlined the laws in the 1942 short story Runaround. They are:

--A robot may not injure a human being, or, through inaction, allow a human being to come to harm.

--A robot must obey the orders given to it by human beings, except where such orders would conflict with the first law.

--A robot must protect its own existence as long as such protection does not conflict with the first or second law.